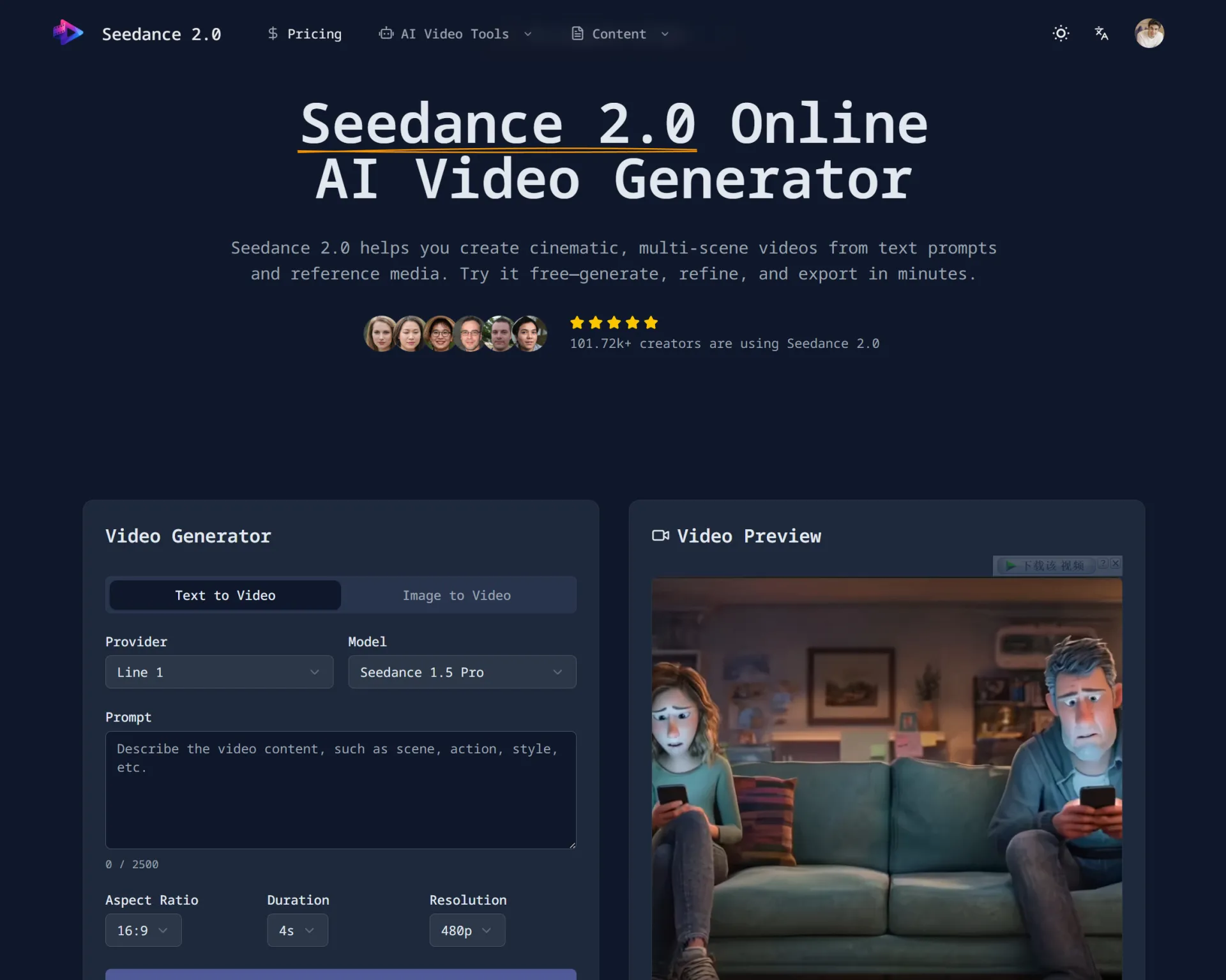

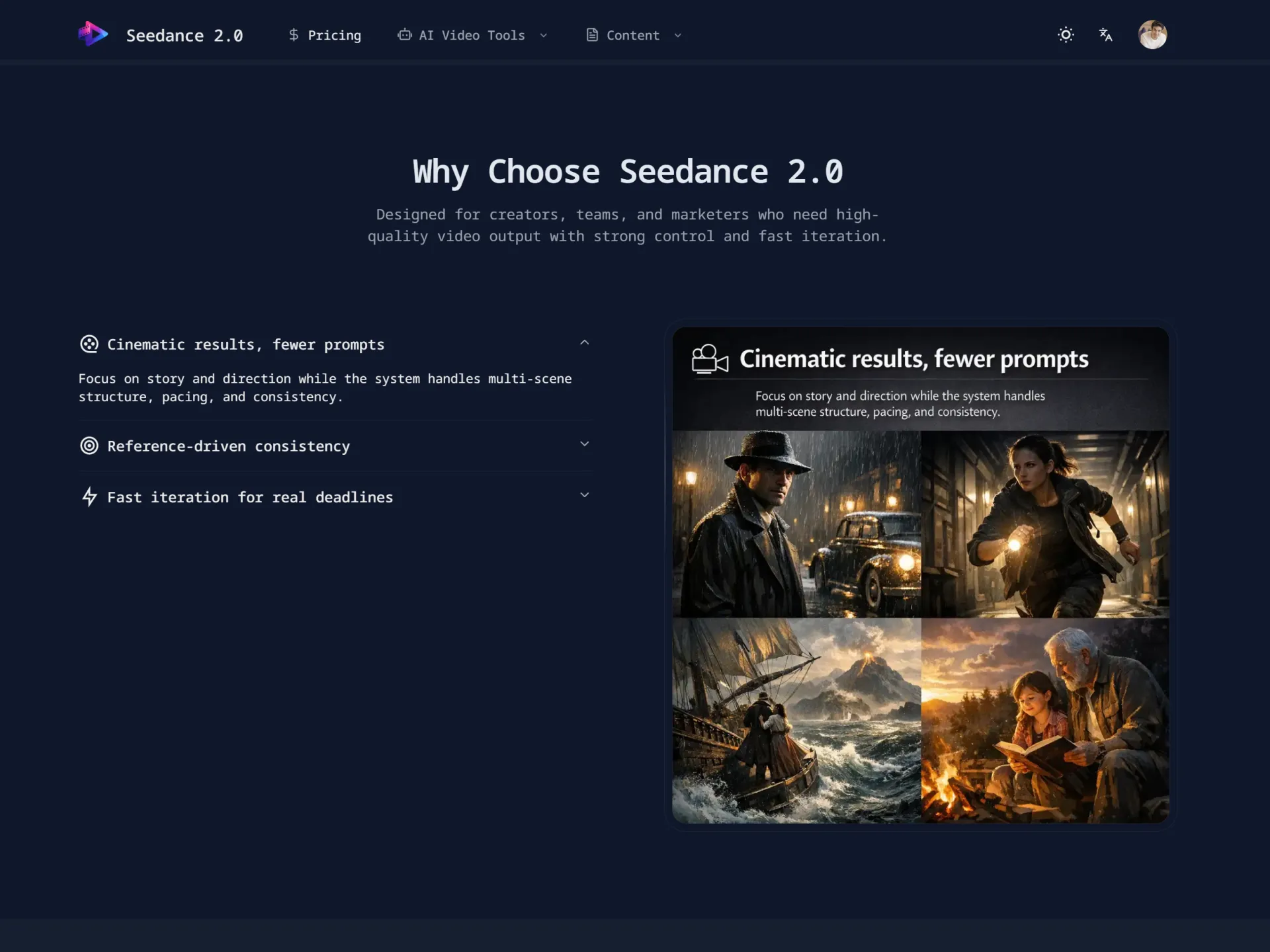

Built a Cinematic AI Short Using Seedance 2.0 – Here’s What I Learned

Over the past few weeks, I’ve been experimenting with AI video generation tools, and I recently built a short cinematic scene using Seedance 2.0. I wanted to share what I created and what I learned during the process.

🎬 What I Built

The project was a 20-second cinematic scene:

A slow drone shot over a futuristic city at sunset, with soft atmospheric fog, glowing windows, and smooth camera motion.

My goal was to test:

- Motion consistency

- Lighting realism

- Scene coherence

- Prompt responsiveness

🛠 How I Built It

Here’s my workflow:

- Prompt Design

  - I started with a basic prompt but quickly realized that specificity matters a lot.

  - Instead of: “Futuristic city at sunset”

  - I refined it to: “Ultra-realistic futuristic skyline at golden hour, cinematic drone movement, volumetric lighting, soft fog, depth of field, 4K, high detail.”

  - The added camera and lighting instructions made a big difference.

- Iteration

  - The first generation had minor motion flickering.

  - To fix it, I:

    - Adjusted the camera speed description

    - Removed conflicting style keywords

    - Simplified the environment details

  - Cleaner prompts gave more stable output.

- Scene Refinement

  - I ran multiple generations and selected the most stable version, then lightly edited the pacing in an external editor (no heavy post-production).
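
One way to keep iterations small is to diff each prompt version against the previous one before generating. The sketch below is only an illustration, not part of Seedance 2.0 or of the workflow described above: `split_phrases` and `diff_prompts` are hypothetical helpers, the prompts are assumed to be comma-separated phrases, and `v2` is an invented second version in which only the camera description changes.

```python
def split_phrases(prompt: str) -> list[str]:
    """Split a comma-separated prompt into trimmed, non-empty phrases."""
    cleaned = (p.strip().rstrip(".").strip() for p in prompt.split(","))
    return [p for p in cleaned if p]


def diff_prompts(old: str, new: str) -> dict[str, list[str]]:
    """Report which phrases were added or removed between two prompt versions."""
    old_phrases, new_phrases = split_phrases(old), split_phrases(new)
    old_keys = {p.lower() for p in old_phrases}
    new_keys = {p.lower() for p in new_phrases}
    return {
        "added": [p for p in new_phrases if p.lower() not in old_keys],
        "removed": [p for p in old_phrases if p.lower() not in new_keys],
    }


# v1 is a shortened form of the refined prompt; v2 is a hypothetical follow-up
# that changes only the camera phrase.
v1 = ("Ultra-realistic futuristic skyline at golden hour, "
      "cinematic drone movement, volumetric lighting, soft fog")
v2 = ("Ultra-realistic futuristic skyline at golden hour, "
      "slow steady drone movement, volumetric lighting, soft fog")

changes = diff_prompts(v1, v2)
print(changes)
```

If the diff shows more than one or two changed phrases, it becomes hard to tell which change affected the flicker, which is the reason for iterating in small steps.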

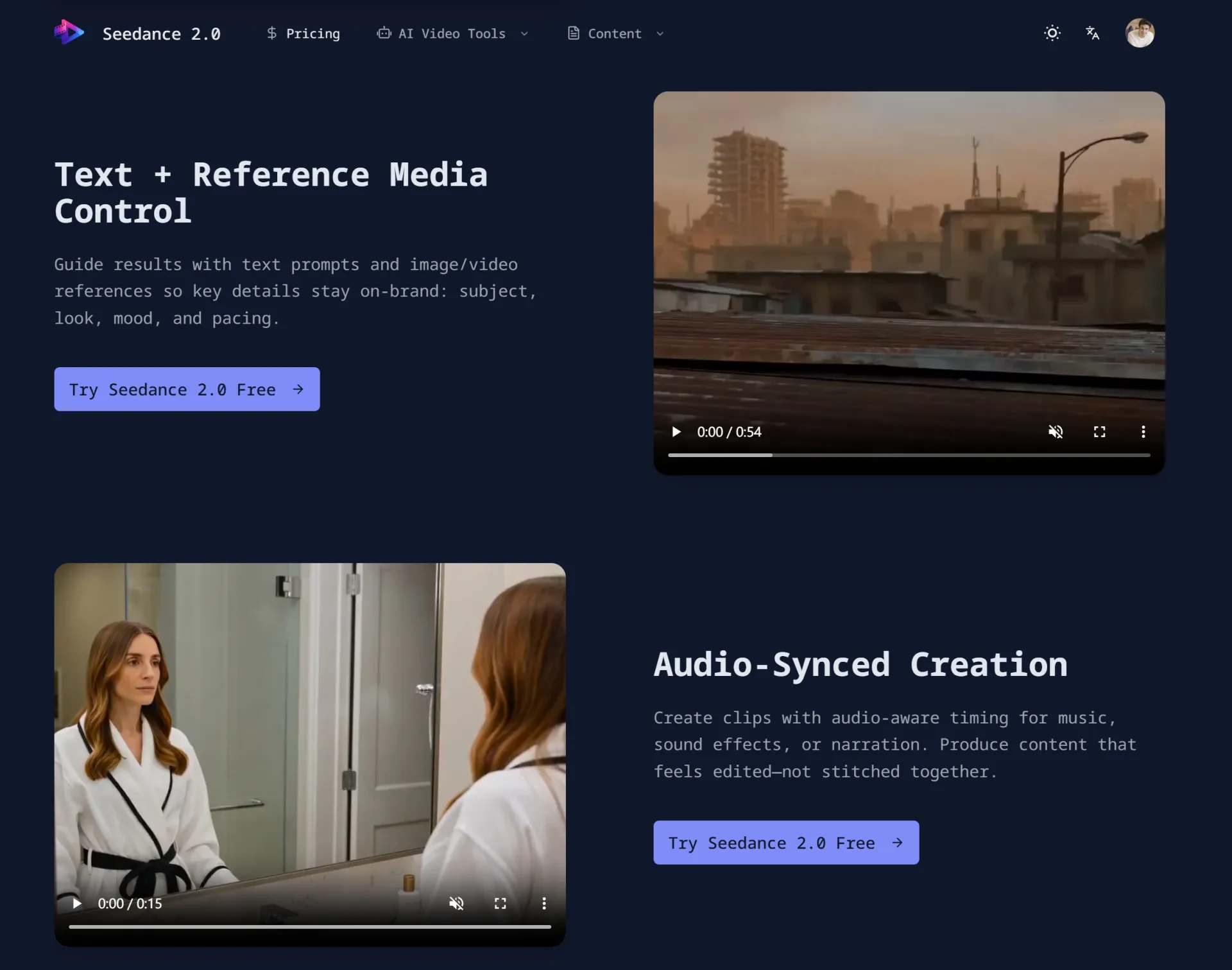

🔎 What Worked Well

- Camera simulation felt surprisingly natural

- Lighting transitions were smooth

- Scene depth and perspective looked cinematic

- Generation time was relatively fast

Compared to earlier AI video models I’ve tested, motion consistency has improved noticeably.

⚠ What Was Challenging

- Overloading prompts reduced stability

- Too many stylistic instructions caused visual noise

- Character-heavy scenes are still harder than environment shots

Prompt clarity > Prompt complexity.
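
A quick check before generating can catch the two problems above: too many phrases and style keywords that pull in different directions. This is a minimal sketch under stated assumptions. `lint_prompt`, the phrase budget of 8, and the conflict pairs are all hypothetical values chosen for illustration; none of them come from Seedance 2.0 documentation.

```python
# Hypothetical settings: tune these to whatever your own tests show.
MAX_PHRASES = 8
CONFLICTING_STYLES = [
    ("ultra-realistic", "anime"),
    ("handheld", "smooth"),
]


def lint_prompt(prompt: str) -> list[str]:
    """Return human-readable warnings for an overloaded or conflicting prompt."""
    phrases = [p.strip() for p in prompt.split(",") if p.strip()]
    text = prompt.lower()
    warnings = []
    if len(phrases) > MAX_PHRASES:
        warnings.append(
            f"{len(phrases)} phrases exceeds the budget of {MAX_PHRASES}"
        )
    for first, second in CONFLICTING_STYLES:
        # Simple substring match; good enough for a sketch, not for production.
        if first in text and second in text:
            warnings.append(f"conflicting styles: '{first}' and '{second}'")
    return warnings


clean = ("Ultra-realistic futuristic skyline at golden hour, cinematic drone "
         "movement, volumetric lighting, soft fog, depth of field, 4K, high detail.")
overloaded = ("Ultra-realistic futuristic skyline, anime style, handheld camera, "
              "smooth drone movement, volumetric lighting, soft fog, "
              "depth of field, 4K, high detail")

print(lint_prompt(clean))
for warning in lint_prompt(overloaded):
    print(warning)
```

The check is deliberately crude. It only flags candidates for trimming; whether a given combination actually hurts stability still has to be judged from the generated output.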

📚 Key Takeaways

If you’re experimenting with AI video tools:

- Be extremely specific about camera movement

- Keep prompts structured (Scene → Lighting → Camera → Quality)

- Remove redundant style phrases

- Iterate in small changes instead of rewriting everything
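
The Scene → Lighting → Camera → Quality structure can be sketched as a small prompt builder. Everything here is an assumption made for illustration: `ShotPrompt` is a hypothetical helper rather than a Seedance 2.0 API, the assignment of phrases to groups is one reasonable reading of the refined prompt, and the output is just a comma-separated string to paste into the tool.

```python
from dataclasses import dataclass, field


@dataclass
class ShotPrompt:
    """Prompt parts kept in the order Scene -> Lighting -> Camera -> Quality."""
    scene: list[str] = field(default_factory=list)
    lighting: list[str] = field(default_factory=list)
    camera: list[str] = field(default_factory=list)
    quality: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the groups in order, dropping case-insensitive repeats."""
        seen = set()
        ordered = []
        for group in (self.scene, self.lighting, self.camera, self.quality):
            for phrase in group:
                key = phrase.strip().lower()
                if key and key not in seen:
                    seen.add(key)
                    ordered.append(phrase.strip())
        return ", ".join(ordered) + "." if ordered else ""


prompt = ShotPrompt(
    scene=["Ultra-realistic futuristic skyline at golden hour", "soft fog"],
    lighting=["volumetric lighting"],
    camera=["cinematic drone movement", "depth of field"],
    quality=["4K", "high detail", "4k"],  # the repeated "4k" is dropped
)
print(prompt.render())
```

Keeping each group in its own field also makes small iterations easier, since a change to the camera description cannot accidentally touch the scene or lighting phrases.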

AI video generation feels closer to “directing” than “editing.”

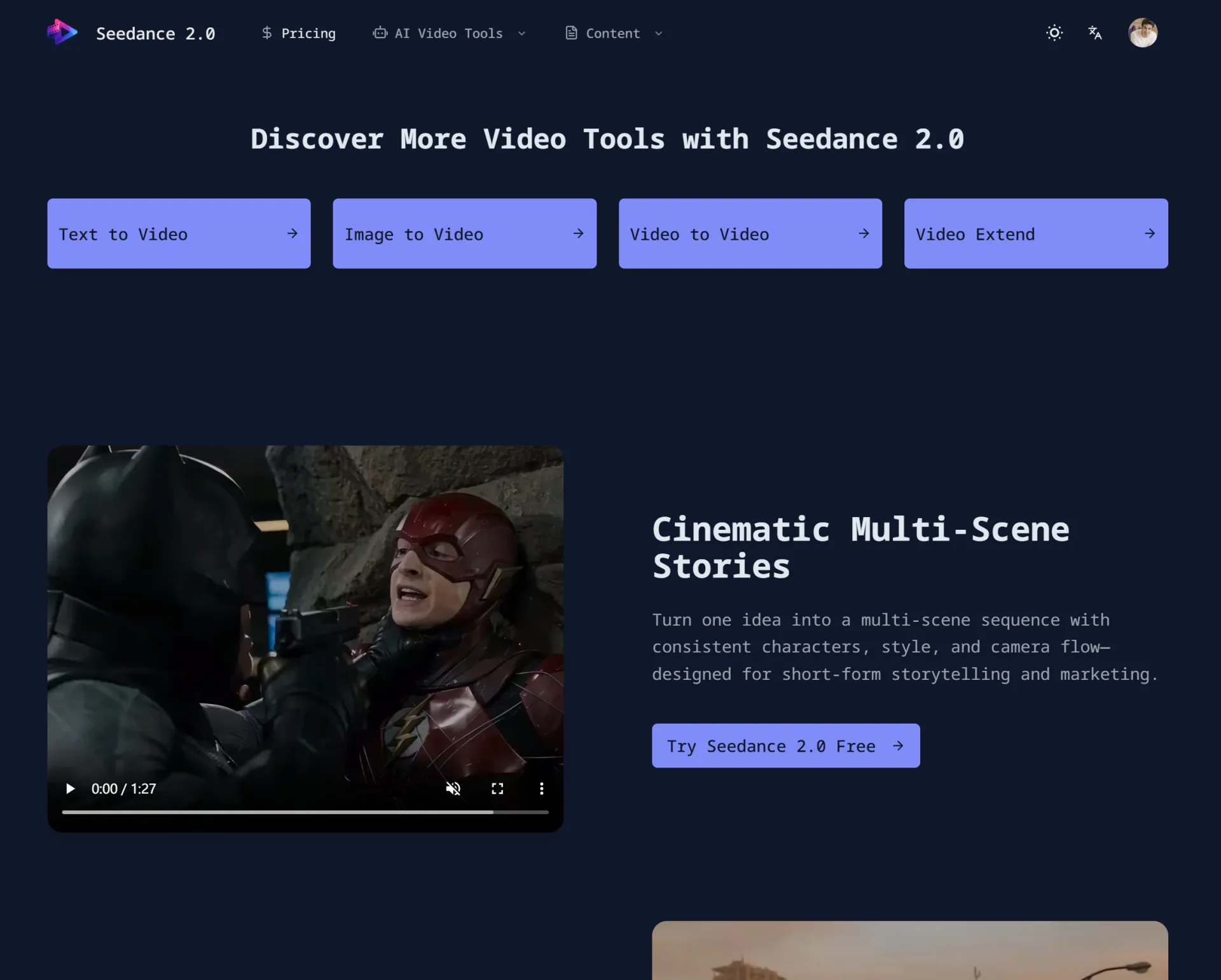

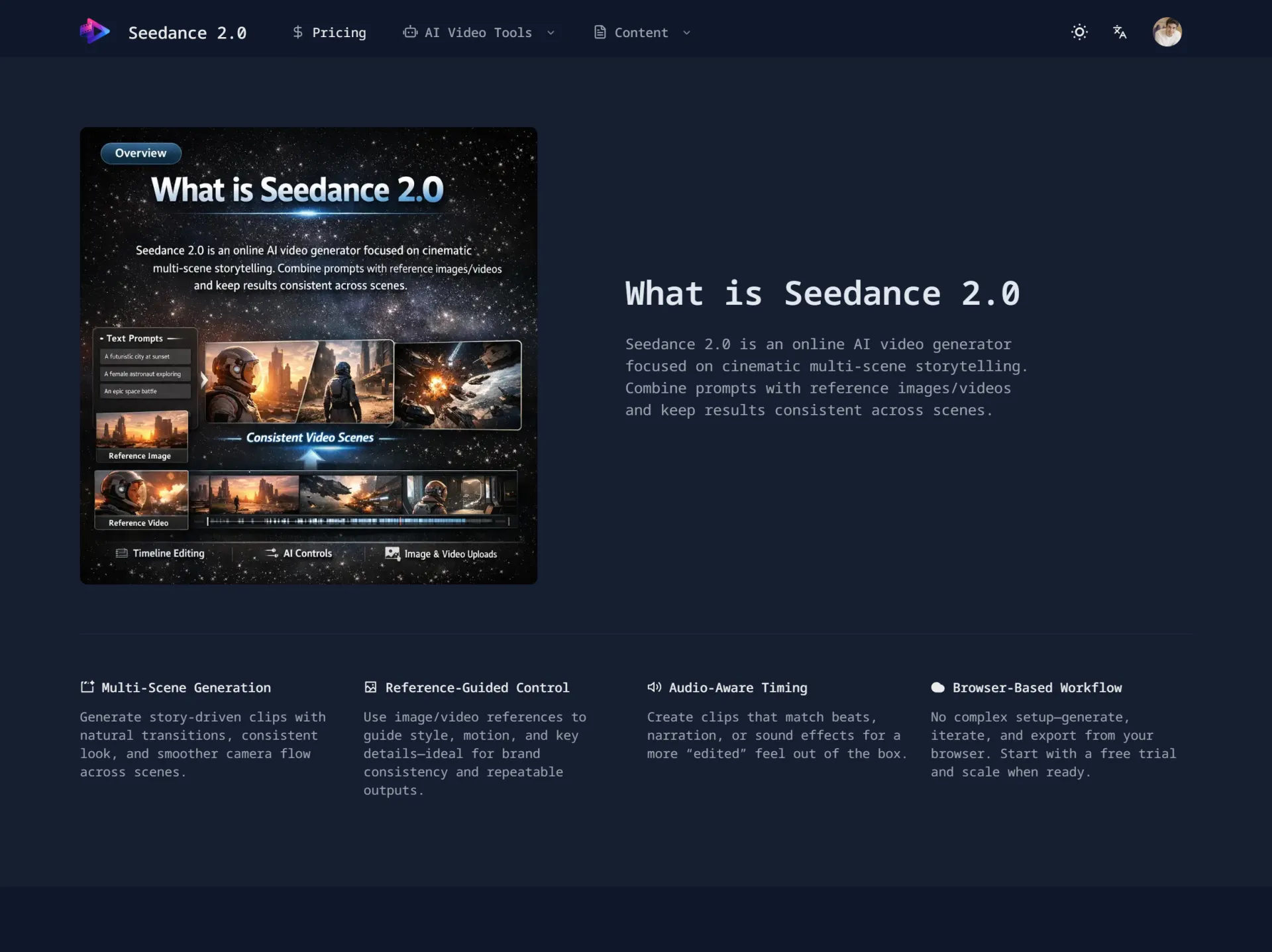

💡 Why I’m Sharing This

I’m interested in exploring how AI tools change creative workflows.

I don’t see tools like Seedance 2.0 replacing traditional filmmaking. I see them as rapid prototyping engines, especially useful for concept visualization.

Would love to hear how others are using AI video tools:

- Are you focusing on storytelling?

- Advertising?

- Visual experiments?

- Pre-visualization for larger projects?

Looking forward to learning from the community.
